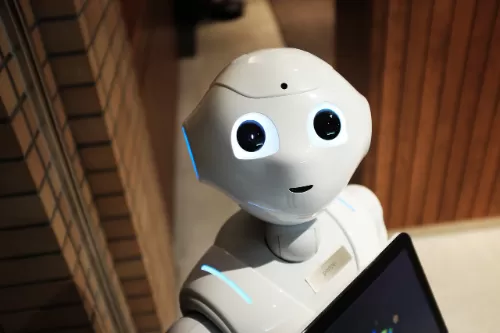

How Robots Detect Emotions

Robots lack consciousness, but they can be trained to identify emotional cues. Cameras analyze facial movements: a furrowed brow might be labeled "frustration," while a smile could signal "happiness." Microphones detect changes in speech patterns, such as a trembling voice that may indicate sadness. Some robots also read data from wearable devices to track physiological responses, such as sweating or an increased pulse. These systems are trained on large datasets of human behavior so that they can match inputs to predefined emotional categories. However, this process is purely mechanical: a robot doesn't "feel" sadness when it detects tears; it simply triggers a prewritten response, like offering a tissue or soothing words.
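The detect-then-respond pipeline described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular robot works: the cue names, emotion labels, and response strings are hypothetical, and a plain lookup table stands in for the trained classifier a real system would use.

```python
# Hypothetical cue names and labels, chosen only for illustration.
# A real system would use a model trained on labeled sensor data.
CUE_TO_EMOTION = {
    "furrowed_brow": "frustration",
    "smile": "happiness",
    "trembling_voice": "sadness",
    "tears": "sadness",
}

# Prewritten responses, one per predefined emotional category.
SCRIPTED_RESPONSE = {
    "frustration": "Would you like me to go over that again?",
    "happiness": "Glad to hear things are going well.",
    "sadness": "I'm sorry. Would you like a tissue?",
}

DEFAULT_RESPONSE = "How can I help?"


def classify(cues):
    """Return the emotion label matched by the most cues, or None."""
    counts = {}
    for cue in cues:
        label = CUE_TO_EMOTION.get(cue)
        if label is not None:
            counts[label] = counts.get(label, 0) + 1
    if not counts:
        return None
    return max(counts, key=counts.get)


def respond(cues):
    """Look up the scripted response for the detected label."""
    return SCRIPTED_RESPONSE.get(classify(cues), DEFAULT_RESPONSE)


print(respond(["tears", "trembling_voice", "smile"]))
```

Nothing in this sketch models an internal state. The label is only a dictionary key that selects a canned string, which is the sense in which the process is mechanical.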

Why Emotional Robots Are Being Developed

The goal isn’t to create machines that experience emotions but to make technology more adaptive. In healthcare, robots with emotion-detection skills can adjust how they interact with patients, for example by speaking softly to someone in distress or by encouraging a patient to keep taking medication. In education, tutoring robots might slow down explanations if a student seems confused. Customer service bots use sentiment analysis to escalate frustrated users to human agents. By mimicking empathy, these systems are meant to fill gaps in industries where human staff are stretched thin. Yet this raises an ethical question: Can a machine’s scripted kindness ever replace genuine human care?
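The customer service case can be illustrated with a simple escalation rule. The sketch below assumes that some upstream sentiment model has already scored each user message between -1 (very negative) and 1 (very positive). The function name, threshold, and window size are hypothetical values picked for the example, not taken from any real product.

```python
def next_action(sentiment_scores, threshold=-0.5, window=3):
    """Decide whether to hand the conversation to a human agent.

    sentiment_scores: floats in [-1, 1], most recent message last
    (assumed to come from a separate sentiment model).
    threshold, window: illustrative defaults, not tuned values.
    """
    recent = sentiment_scores[-window:]
    if len(recent) < window:
        # Too few messages to judge a trend.
        return "continue"
    average = sum(recent) / len(recent)
    return "escalate_to_human" if average <= threshold else "continue"


print(next_action([0.1, -0.8, -0.6, -0.7]))
```

Averaging over the last few messages, rather than reacting to a single one, keeps one sharp remark from triggering a handoff while still catching sustained frustration.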

The Risks of Confusing Code with Compassion

While robots can simulate empathy, they cannot reciprocate it. A machine might "comfort" a grieving person by reciting condolences from a script, but it has no capacity to share sorrow or offer personal wisdom. This illusion of understanding could lead to overreliance on robots for emotional support, especially among vulnerable groups such as isolated seniors or children. Critics warn that mistaking algorithmic responses for real empathy might erode human connections and turn synthetic interactions into a Band-Aid for societal loneliness.

The Line Between Tool and Companion

Robots are tools, not companions. Their value lies in consistency and efficiency, not emotional depth. For example, a therapy robot might remind someone to take medication or practice breathing exercises, tasks that require reliability rather than genuine empathy. For the same reason, developers should avoid designs that anthropomorphize robots excessively, as this could mislead users into believing machines possess human-like intentions or feelings.

Conclusion

Robots may mimic empathy through sensors and scripts, but they remain sophisticated tools—not sentient beings. While their ability to detect emotions can enhance healthcare, education, and customer service, these machines lack the lived experience and reciprocal understanding that define genuine human connection. The real promise of "empathetic" robots lies not in replacing compassion, but in supporting it—freeing humans to focus on the irreplaceable warmth of personal care. As we integrate these technologies, we must safeguard a truth no algorithm can replicate: empathy's power stems not from flawless execution, but from the messy, heartfelt humanity that machines will never possess.
